Mike Long’s (2007) book Problems in SLA is divided into three parts: Theory, Research, and Practice.

Part One

In chapter 1, “Second Language Acquisition Theories”, Long reviews some of the many approaches to theory construction in SLA and suggests that the plethora of SLA theories obstructs progress. In chapter 2, Long suggests that culling is required, and he uses Laudan’s “problem-solving” framework (e.g., Laudan, 1996) as the basis for an evaluation process. Briefly, theories can be evaluated by asking how many empirical problems they explain, giving priority to problems of greater significance, or weight. Long suggests that among the weightiest problems in SLA are age differences, individual variation, cross-linguistic influence, autonomous interlanguage syntax, and interlanguage variation.

Part Two

Chapter 3 deals with “Age Differences and the Sensitive Periods Controversy in SLA”. Why do the vast majority of adults fail to achieve native-like proficiency in a second language? Long argues that maturational constraints, or “sensitive periods”, explain this problem. Chapter 4 deals with recasts. As we know, recasts are a controversial issue, but they play an important role in Long’s focus on form. Long gives his usual careful review of the research literature and concludes that recasts facilitate acquisition “without interrupting the flow of conversation and participants’ focus on message content” (p. 94).

Part Three

Chapter 5, “Texts, Tasks and the Advanced Learner”, discusses Long’s version of TBLT. Long claims that his TBLT is superior to “the traditional grammatical syllabus and accompanying methodology, or what I call ‘focus on forms’” (p. 121) because it respects, rather than contradicts, robust findings in SLA. Long gives particular attention to the methodological principles of “focus on form” (reactive attention to form while attention is on communication), and “elaborated input” (use elaborated rather than simplified texts). Finally, chapter 6, “SLA: Breaking the Siege”, responds to three “broad accusations made against SLA research in recent years”. The charges are “sociolinguistics naiveté, modernism, and irrelevance for language teaching”. Long finishes with suggestions on how the siege might be broken.

Discussion

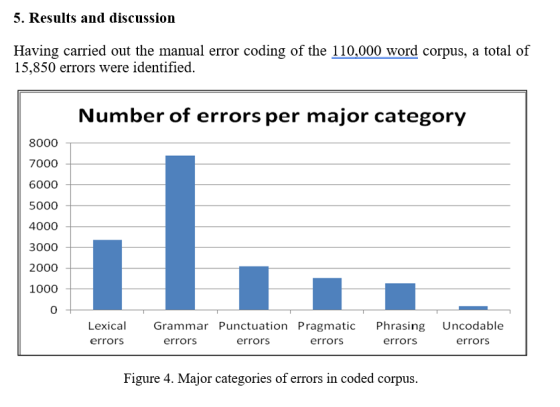

The book packs a powerful punch. The references section is impressive (as usual); chapters 3, 4, and 5 are still very informative; and chapters 1, 2, and 6 are still a cogently argued case for a critical rationalist approach to SLA research and its application to ELT. A slight niggle is that Long’s discussion of theory construction and evaluation in chapters 1 and 2 is not entirely consistent with the rest of the book. There’s a possible conflict between chapters 3 and 4 – the claim that SLA is maturationally constrained (a view usually associated with “nativist” theories) sits uneasily with the claims made for recasts – and the absence of any mention of the interaction hypothesis adds a bit more doubt about exactly what Long himself regards as the best theory of SLA. Such doubts are dealt with in his (2015) book Second Language Acquisition and Task-Based Language Teaching.

Chapter 3 of the 2015 book describes “A Cognitive-Interactionist Theory of Instructed Second Language Acquisition (ISLA)”. Note that this is a theory of Instructed SLA, where, Long says, “necessity and sufficiency are less important than efficiency. Provision of negative feedback, for example, might eventually turn out not to be a relevant factor in a theory of SLA, as argued persuasively by Schwartz (1993), but its empirical track record, to date, as a facilitator of rate and, arguably, level of ultimate attainment makes it a legitimate component – in fact, a key component – of a theory of ISLA”. Long claims that his “embryonic” theory addresses empirical problems concerning (i) success and failure in adult SLA, (ii) processes in IL development, and (iii) effects and non-effects of instruction. The explanation is based on an emergentist, or usage-based (UB), theory of language acquisition:

A plausible usage-based account of (L1 and L2) language acquisition (see, e.g., N.C. Ellis 2007a,b, 2008c, 2012; Goldberg & Casenhiser 2008; Robinson & Ellis 2008; Tomasello 2003), with implicit learning playing a major role, begins with initially chunk-learned constructions being acquired during receptive or productive communication, the greater processability of the more frequent ones suggesting a strong role for associative learning from usage. Based on their frequency in the constructions, exemplar-based regularities and prototypical morphological, syntactic, and other patterns – [Noun stem-PL], [Base verb form-Past], [Adj Noun], [Aux Adv Verb], and so on – are then induced and abstracted away from the original chunk-learned cases, forming the basis for attraction, i.e., recognition of the same rule-like patterns in new cases (feed-fed, lead-led, sink-sank-sunk, drink-drank-drunk, etc.), and for creative language use (Long, 2015, pp. 48-49).

I personally don’t find Ellis’ usage-based account plausible, and I still can’t quite get used to the fact that Long went along with it. I console myself with the fact that Long didn’t join the Douglas Fir group, and that he retained his commitment to the importance of sensitive periods and interlanguage development. Furthermore, warts and all, I think Long’s book on TBLT is the best book on ELT ever written. Having said all that, I want to go back to Long’s concerns about theory construction in SLA and suggest that they don’t justify his siding with Nick Ellis “and the UB (not UG) hordes”.

Laudan’s aim was to reply to criticism of Popper’s “naïve” falsification criterion. He tried to improve on the work of Lakatos (who had the same aim of defending Popper’s falsification criterion) by suggesting, firstly, that science is to do with problem-solving, and secondly, that science makes progress by evolving research traditions. This concern with research traditions is at the heart of Laudan’s endeavor, and I don’t think Long sufficiently recognizes its importance. Laudan talks about research traditions in science; Long wants to talk about theories of SLA. In my opinion, Laudan gives a poor account of research traditions in science, and Long makes poor use of Laudan’s criteria for theory evaluation.

Laudan says that the overall problem-solving effectiveness of a theory is determined by assessing the number and importance of empirical problems which the theory solves and deducting therefrom the number and importance of the anomalies and conceptual problems which the theory generates (Laudan, 1978: 68). In a later work, Laudan (1996) develops his “problem-solving” approach and offers a taxonomy. He suggests, first, that we separate empirical from conceptual problems, and that as far as empirical problems are concerned, we distinguish between “potential problems, solved problems and anomalous problems.” ‘Potential problems’ constitute what we take to be the case about the world, but for which there is as yet no explanation. ‘Solved problems’ are that class of putatively germane claims about the world which have been solved by some viable theory or another. ‘Anomalous problems’ are actual problems which rival theories solve but which are not solved by the theory in question (Laudan, 1996: 79). As for conceptual problems, Laudan lists four problems that can affect any theory.

Laudan claims that this “taxonomy” helps in the relative assessment of rival theories, while remaining faithful to the view that many different theories in a given domain might well have different things to offer the research effort. Laudan argues that it is rational to choose the most progressive research tradition, where “most progressive” means the maximum problem-solving effectiveness. Note first that Laudan refers to the most progressive research tradition, not theory. But the main problem is how we assess the problem-solving effectiveness of rival research traditions. In the end, we will be forced to compare different theories belonging to different research traditions, and then, how does one count the number of empirical problems solved by a theory? For example, is the “problem of the poverty of the stimulus” to be counted as one problem or several? In principle the number of problems could be infinite. And how are we to assign different weightings to theories? How much weight should we give to Schmidt’s Noticing Hypothesis, and how much to Long’s Interaction Hypothesis, for example? Laudan’s inability to suggest how we might go about enumerating the seven types of problems in his taxonomy that are dealt with by any given research tradition (itself not a clearly-defined term), or how these problems might then be weighted, seems a fatal weakness in his account.

Even if we ignore this weakness, I don’t think Long makes a persuasive case for the UB research tradition he favours. In the field of linguistics, the nativist, UG-led research tradition has an impressive record; I can’t think of any way that the UB theories of N.C. Ellis, Goldberg & Casenhiser, Robinson & Ellis, and Tomasello can be made to score higher than the UG-based theories of Chomsky (1959), White (1989), Carroll (2001), and Hawkins (2001), for example. I’ve argued elsewhere in this blog against the emergentist view, usually citing Eubank and Gregg (2002) and Gregg (2003). Let me just summarise one point Gregg makes here.

For emergentists, SLA is a matter of associative learning: on the basis of sufficiently frequent pairings of two elements in the environment, one abstracts to a general association between the two elements. The environment provides all the necessary cues for these associations to form. Gregg (2003) gives this example from Ellis: ‘in the input sentence “The boy loves the parrots,” the cues are: preverbal positioning (boy before loves), verb agreement morphology (loves agrees in number with boy rather than parrots), sentence initial positioning and the use of the article the’ (1998: 653). Gregg asks ‘In what sense are these ‘cues’ cues, and in what sense does the environment provide them?’ The environment can only provide perceptual information, for example, the sounds of the utterance and the order in which they are made. Thus, in order for ‘boy before loves’ to be a cue that subject comes before verb, the learner must already have the concepts SUBJECT and VERB. According to Ellis, if SUBJECT is one of the learner’s concepts, that concept must emerge from the input. But how can it? How can the learner come to know about subjects or agreement in English? What ‘cues’ are there in the environment for us to learn the concept SUBJECT so that later on we can use that concept to abstract SVO from other input sentences? As Gregg (2003, p. 120) puts it:

Not only is it unclear how ‘preverbal position’ could be associated with ‘clausal subject’ or ‘agent of verb’, it is also not clear that these should be associated (Gibson, 1992): For instance, in sentences like ‘The mother of the boy loves the parrots’ or ‘The policeman who followed the boy loves the parrots,’ ‘the boy’ is preverbal but is neither subject nor agent. In short, there is no reason to think that ‘comes before the verb’ is going to be useful information for a learner or a hearer, in the absence of knowledge of syntactic structure. But once again, the emergentist owes us an explanation of how syntactic structure can be induced from perceptual information in the input.

Likewise, it does not make sense to say that learners “notice” formal aspects of the language from the input – grammar cannot, by definition, be “noticed” from perceptual information in the environment.

I don’t doubt that Mike would have made short work of these criticisms had I managed to put them to him. I recently asked him if we could discuss Chapter 3 on Skype, but he was already too ill. While there are, in my opinion, almost insurmountable problems for an empiricist, usage-based theory of language learning to overcome, and while it follows that I don’t think Long resolves them, in his (2015) book SLA & TBLT, Long uses Laudan’s “Problems and Explanations” framework to address four problems, rather than present any full theory of SLA. He does so with his usual scholarship, and he is absolutely clear about the most important issue facing us when it comes to designing courses of English for speakers of other languages: learning a new language is “far too large and too complex a task to be handled explicitly” …. “implicit learning remains the default learning mechanism for adults”. You can see his quote in context in this post: Mike Long: Reply to Carroll’s comments on the Interaction Hypothesis.

References

Carroll, S. (2001). Input and evidence: The raw material of second language acquisition. Amsterdam: Benjamins.

Chomsky, N. (1959). Review of B.F. Skinner, Verbal behavior. Language, 35, 26–58.

Eubank, L. and Gregg, K. R. (2002). News Flash–Hume Still Dead. Studies in Second Language Acquisition, 24, 237-24.

Gregg, K. R. (2003). The state of emergentism in second language acquisition. Second Language Research, 19(2), 95–128.

Hawkins, R. (2001). Second language syntax: A generative introduction. Oxford: Blackwell.

Laudan, L. (1978). Progress and its problems: Towards a theory of scientific growth. University of California Press.

Laudan, L. (1996). Beyond positivism and relativism: Theory, method, and evidence. Oxford and New York: Westview Press.

White, L. (1989). Universal Grammar and second language acquisition. Amsterdam: Benjamins.